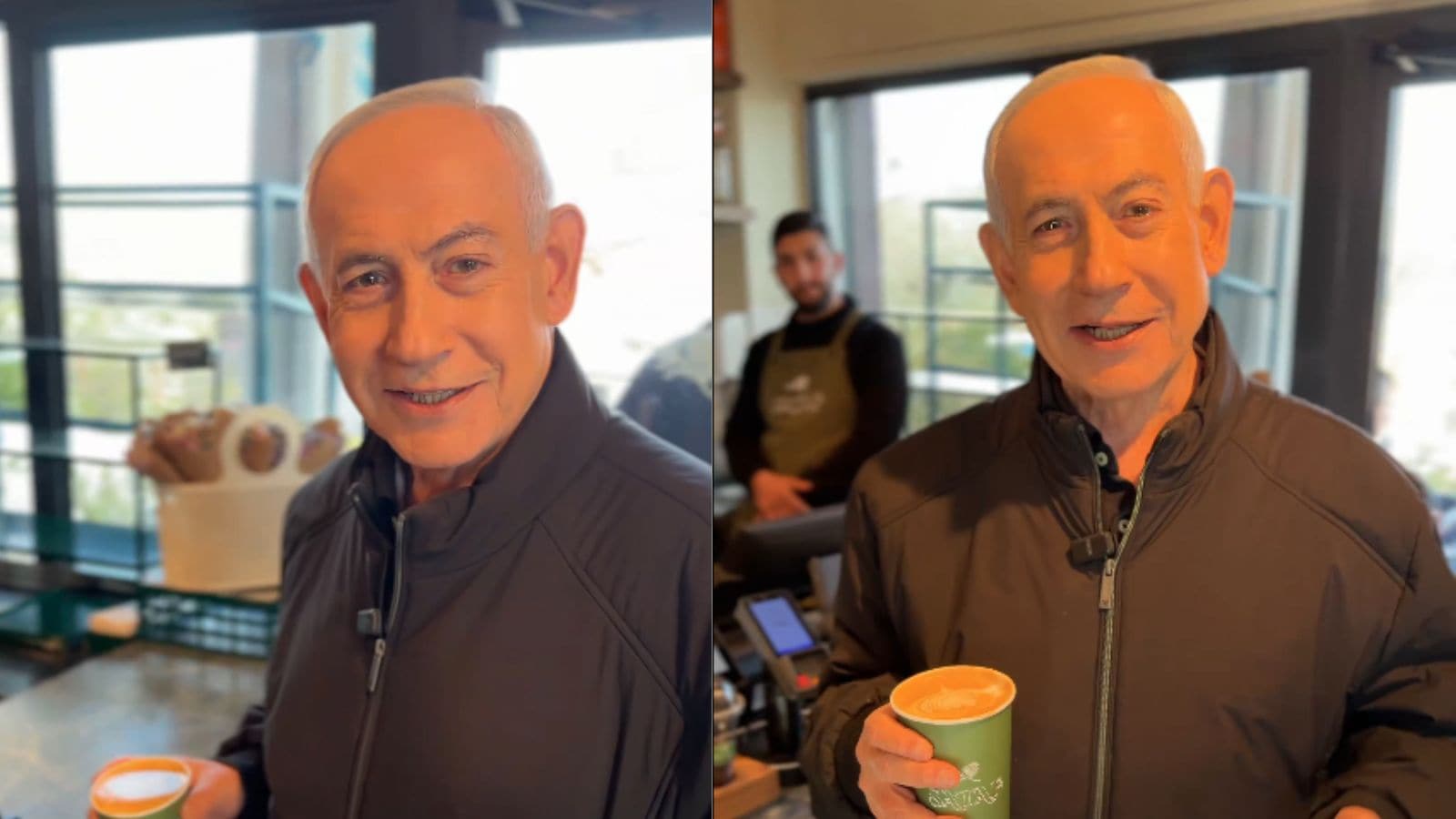

The convergence of algorithmic amplification and political volatility has transformed the "proof of life" protocol from a routine verification into a high-stakes arena of semiotic combat. When Israeli Prime Minister Benjamin Netanyahu released a video addressing rumors of his death and biological anomalies—specifically the "six fingers" conspiracy theory—the event served as a case study in the mechanics of modern disinformation. This incident reveals the structural vulnerabilities of the digital information ecosystem, where the burden of proof is shifted from the accuser to the accused, creating a circular logic that traditional communication strategies fail to disrupt.

The Triad of Digital Delegitimation

The spread of misinformation regarding a head of state's physical health or biological reality operates through three distinct tactical layers. Understanding these layers is required to analyze why a simple video response often fails to provide a definitive resolution.

- Biological Dehumanization: By introducing the claim of "six fingers" (polydactyly), the narrative moves beyond political critique into the realm of the "uncanny valley." This shifts the public perception of the target from a political actor to a biological anomaly or a digital fabrication.

- The Dead Internet Hypothesis: The accusation that a leader is deceased and replaced by a CGI avatar or a body double leverages growing public awareness of generative AI, including Generative Adversarial Networks (GANs) and diffusion models. As deepfake technology approaches parity with reality, the threshold for "sufficient evidence" of life becomes unreachable for a skeptical audience.

- Algorithmic Arbitrage: Disinformation agents exploit the engagement metrics of social media platforms. Because "proof of life" content generates high-frequency interactions—denials, fact-checks, and shares—the platform algorithms prioritize the reach of the original lie to maximize dwell time.
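The amplification effect in the last bullet can be illustrated with a toy ranking model. This is a minimal sketch, not a description of any real platform: the `WEIGHTS` table, the `Post` class, and the interaction counts are all hypothetical. The only assumption that matters is that the score counts interactions without regard to whether they endorse or refute the post.

```python
from dataclasses import dataclass

# Hypothetical interaction weights; real ranking systems are proprietary
# and far more complex than a weighted sum.
WEIGHTS = {"share": 3.0, "quote": 2.5, "reply": 2.0, "like": 1.0}


@dataclass
class Post:
    post_id: str
    interactions: dict  # interaction type -> count


def engagement_score(post: Post) -> float:
    """Weighted interaction volume; the sentiment of each interaction is ignored."""
    return sum(WEIGHTS.get(kind, 0.0) * count
               for kind, count in post.interactions.items())


def rank(posts):
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


rumor = Post("rumor", {"share": 100, "like": 200})
policy = Post("policy", {"share": 150, "like": 250})

before = [p.post_id for p in rank([rumor, policy])]

# Fact-checkers and critics quote and reply to the rumor in order to refute it.
rumor.interactions["quote"] = 80
rumor.interactions["reply"] = 120

after = [p.post_id for p in rank([rumor, policy])]

print(before)  # ['policy', 'rumor']
print(after)   # ['rumor', 'policy']
```

In this toy model the refutations alone are enough to lift the rumor above the policy post, because a sentiment-blind score cannot tell a debunk from an endorsement.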

Verification Bottlenecks in the Post-Truth Era

The Prime Minister's attempt to debunk these rumors by holding a cup of coffee and engaging in casual dialogue utilizes a "humanization through mundane action" framework. However, the efficacy of this strategy is limited by several technical and psychological bottlenecks.

The Resolution Paradox

In a digital environment, higher video resolution does not necessarily correlate with higher trust. In fact, professional-grade lighting and 4K resolution are often cited by conspiracy theorists as evidence of a "studio-controlled environment," whereas low-quality, "leaked" footage is perceived as more authentic. This creates a strategic dilemma: government communications teams must choose between professional polish (which risks looking fake) and raw aesthetics (which risks looking unpresidential).

Latency as a Weapon

The time gap between the emergence of a rumor and the official response is a critical variable in the "truth-decay" function. In the case of Netanyahu's response, the delay allowed the "six fingers" narrative to achieve a high enough level of saturation that any subsequent video was viewed through a confirmation-bias lens. Viewers were no longer looking for the message; they were scanning the frames for digital artifacts or anatomical inconsistencies.

The Mechanics of Anatomical Disinformation

The specific claim of "six fingers" is a calculated choice in information warfare. Unlike claims of secret heart surgeries or hidden illnesses, which require medical expertise to debate, anatomical anomalies are "visually verifiable" by laypeople. This democratizes the conspiracy, allowing any user with a smartphone to become a "forensic analyst."

- Motion Blur Artifacts: In compressed video formats, rapid hand movements often produce ghosting or blurring. These standard digital artifacts are frequently misinterpreted by non-technical audiences as "glitches in the matrix" or evidence of poor AI rendering.

- Compression Loss: Low-bitrate streaming reduces the detail in complex textures like skin or hair. When a hand moves across a dark background, the lack of data can cause digits to appear merged or duplicated, providing the visual "proof" required to sustain the narrative.
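Both artifacts can be reproduced on a deliberately simplified signal. The sketch below treats one row of pixels across a hand as a 1-D brightness profile and counts how many separate "digits" survive after smoothing (a stand-in for detail lost to low bitrate) and after blending two frames (a stand-in for motion ghosting). All function names, sizes, and thresholds are illustrative assumptions; real codecs use block transforms and motion compensation, which this does not model.

```python
def hand_profile(width=40, finger_width=4, gap=2, start=2, fingers=5):
    """Toy 1-D brightness profile: 1.0 on a finger, 0.0 in the gaps."""
    row = [0.0] * width
    for k in range(fingers):
        left = start + k * (finger_width + gap)
        for x in range(left, left + finger_width):
            row[x] = 1.0
    return row


def box_blur(row, radius=2):
    """Average each sample with its neighbours (zero padding at the edges)."""
    n = len(row)
    window = 2 * radius + 1
    return [
        sum(row[j] for j in range(i - radius, i + radius + 1) if 0 <= j < n) / window
        for i in range(n)
    ]


def ghost(row, shift):
    """Blend a frame with a copy of itself shifted right by `shift` samples."""
    shifted = [0.0] * shift + row[: len(row) - shift]
    return [(a + b) / 2 for a, b in zip(row, shifted)]


def count_blobs(row, threshold):
    """Count contiguous runs of samples brighter than `threshold`."""
    blobs, inside = 0, False
    for value in row:
        bright = value > threshold
        if bright and not inside:
            blobs += 1
        inside = bright
    return blobs


sharp = hand_profile()
blurred = box_blur(sharp, radius=2)   # gaps between fingers fill in
ghosted = ghost(sharp, shift=6)       # hand moved one finger pitch between frames

print(count_blobs(sharp, 0.5))     # 5 distinct digits
print(count_blobs(blurred, 0.5))   # 1 merged blob
print(count_blobs(ghosted, 0.25))  # 6 apparent digits
```

The ghosting case is contrived (the shift equals exactly one finger pitch), but it shows how ordinary frame blending can yield a still image with an apparent extra digit and no generative model involved.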

Strategic Communication in High-Friction Environments

To counter these sophisticated disinformation cycles, a shift from "reactive denial" to "preemptive transparency" is necessary. The current model of responding to specific rumors—like the coffee video—only serves to validate the rumor as a topic worthy of high-level discussion.

A more robust strategy involves the implementation of Cryptographic Proof of Authenticity. Rather than relying on visual cues, digital communications can be embedded with C2PA (Coalition for Content Provenance and Authenticity) metadata. This lets a viewer check the declared capture source, the time of recording, and any subsequent edits against a cryptographically signed manifest bound to the file, with trust anchored in the signer's certificate. Without this technical backbone, visual evidence remains subjective and susceptible to the "liar’s dividend," where the mere possibility of fakery is enough to discredit the truth.
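The underlying idea, binding provenance claims to the exact bytes of a file and signing the result, can be sketched with the Python standard library. This is not the C2PA format or API: `make_manifest` and `verify` are hypothetical helpers, the source name and timestamp are placeholders, and HMAC with a shared key stands in for the certificate-based asymmetric signatures a real provenance system would use.

```python
import hashlib
import hmac
import json


def make_manifest(media: bytes, source: str, recorded_at: str, key: bytes) -> dict:
    """Build a claim about the media and sign it (HMAC as a stand-in signature)."""
    claim = {
        "source": source,
        "recorded_at": recorded_at,
        "sha256": hashlib.sha256(media).hexdigest(),  # binds the claim to the bytes
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(media: bytes, manifest: dict, key: bytes) -> bool:
    """Check that the claim is authentic and that it matches these exact bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_ok = hashlib.sha256(media).hexdigest() == manifest["claim"]["sha256"]
    return signature_ok and content_ok


key = b"demo-key-not-for-production"          # placeholder secret
video = b"placeholder bytes standing in for a video file"

manifest = make_manifest(video, "press-office-camera-01", "2024-01-01T09:00:00Z", key)

ok_original = verify(video, manifest, key)
ok_edited_media = verify(video + b"\x00", manifest, key)

# Altering a claim after signing should also fail verification.
forged = {"claim": dict(manifest["claim"], source="unknown"),
          "signature": manifest["signature"]}
ok_forged_claim = verify(video, forged, key)

print(ok_original, ok_edited_media, ok_forged_claim)  # True False False
```

Any change to the file or to the claimed source breaks verification, so the question shifts from "does this look real?" to "does this check out against a key the viewer already trusts?". With a shared-key HMAC the verifier could also forge manifests, which is why public-key signatures are the appropriate choice outside a sketch.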

The Cost Function of Defensive PR

Every time a head of state responds to a fringe conspiracy theory, they incur a "dignity cost." This is a notional measure of how much political capital is spent to address low-quality information. If the Prime Minister responds to a "six fingers" rumor, he signals that the rumor has reached a critical mass. This creates a feedback loop where bad actors are incentivized to create increasingly absurd claims to force a response from the state apparatus.

- Resource Misallocation: Intelligence and PR teams are diverted from policy communication to debunking biological myths.

- Over-Exposure: Constant presence on camera to prove "vitality" can lead to audience fatigue, diminishing the impact of genuine policy announcements.

- Validation of Fringe Platforms: By addressing rumors that originate on fringe social media, the state inadvertently elevates those platforms to the status of a "legitimate source" that requires official attention.

Cognitive Load and the Erosion of Nuance

The human brain is poorly equipped to handle the volume of contradictory visual data produced during these cycles. When a user sees two images—one showing a normal hand and one showing a "glitched" hand—the cognitive load required to determine the source of the glitch is often too high. Most users default to "truth-agnosticism," where they stop caring what is real and instead focus on which narrative aligns with their existing political tribe.

This erosion of nuance is the ultimate goal of the disinformation actor. The objective is not necessarily to make people believe the Prime Minister has six fingers; it is to make the public believe that nothing they see on a screen can be trusted. Once the baseline of shared reality is destroyed, the state loses its ability to govern by consent, as every directive is viewed as a potential fabrication.

The path forward for state actors requires a move away from "performance" and toward "provenance." Instead of a video of a leader drinking coffee to prove they are alive, the state must build a persistent, verified digital identity that cryptographically signs all public communications. This removes the "forensic analysis" from the hands of the untrained public and places it within a verifiable technical framework. The era of believing one's eyes is over; the era of verifying the hash has begun.

The strategic imperative now lies in the rapid deployment of blockchain-verified media streams. Governments that fail to adopt cryptographic standards for their public addresses will find themselves perpetually trapped in a defensive posture, fighting a war of attrition against an endless stream of algorithmically generated hallucinations. The only way to win a game of digital "proof of life" is to change the rules of evidence entirely.
